What Our MaRS Application Taught Me About AI Pre-Screening

We applied to MaRS Discovery District and went through its intake process, only to learn on the screening call that our company was not what they were looking for. The rejection wasn't the issue. The issue was that the likely outcome could have been identified much earlier, from the written application.

By Oscar ONeill, Founder of DigitalStaff.ca

The Short Version

We applied to MaRS Discovery District because, based on its public positioning, it seemed like the kind of organization that supports ambitious Canadian science and technology companies as they grow.

We completed the written application, waited for review, prepared for the next step, and joined the screening call. Early in that conversation, it became clear that our company was not the kind of fit they were looking for.

That outcome is completely fair. Not every business is the right fit for every program.

But the experience highlighted something important: the information that appeared to drive the decision had already been disclosed in the application. If that mismatch could have been surfaced earlier, both sides could have saved time.

That is the real point of this post.

Why We Applied

MaRS publicly presents itself as a major part of Canada’s startup ecosystem and invites founders to apply for support. Its website says it helps science and tech companies scale globally, and its broader messaging emphasizes support across stages of growth.

From our perspective, that sounded promising.

DigitalStaff is an AI and automation company. We build systems that reduce manual work, connect disconnected software, and automate repetitive back-office workflows. Based on the public-facing language, it seemed reasonable to think we were at least worth screening.

So we applied.

What Happened

The process itself was simple: a written application, a wait for review, and then a screening call.

On that call, it became clear that our business was not aligned with what they were looking for.

Again, the rejection is not the issue. The issue is that the factors discussed on the call were not new discoveries. They were already present in the written application.

So the operational question becomes:

Why did it take a live screening call to surface a likely mismatch that might have been identifiable from the written application?

The Process Gap

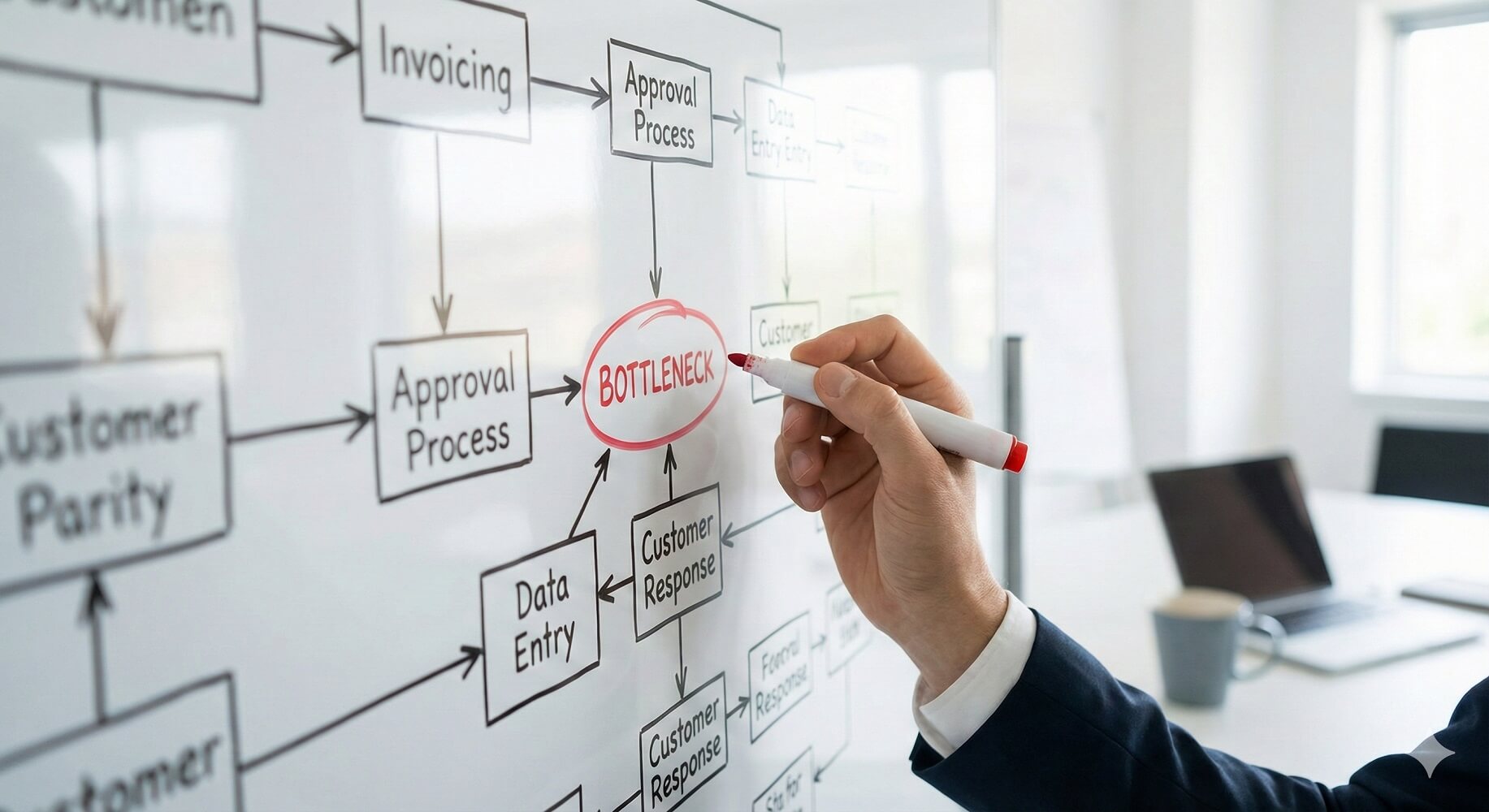

This kind of gap shows up in organizations everywhere.

Information comes in through a form. A human reviews it. A next step gets scheduled. More time is spent. Then later, someone realizes there was an obvious fit issue from the start.

That creates avoidable friction on both sides. The applicant invests time preparing for a conversation that was unlikely to change the outcome, and the internal team spends time reviewing, scheduling, and attending a call that may not have been necessary.

This does not require bad intent. It is simply what happens when intake and qualification rely too heavily on manual review.

How AI Could Improve This

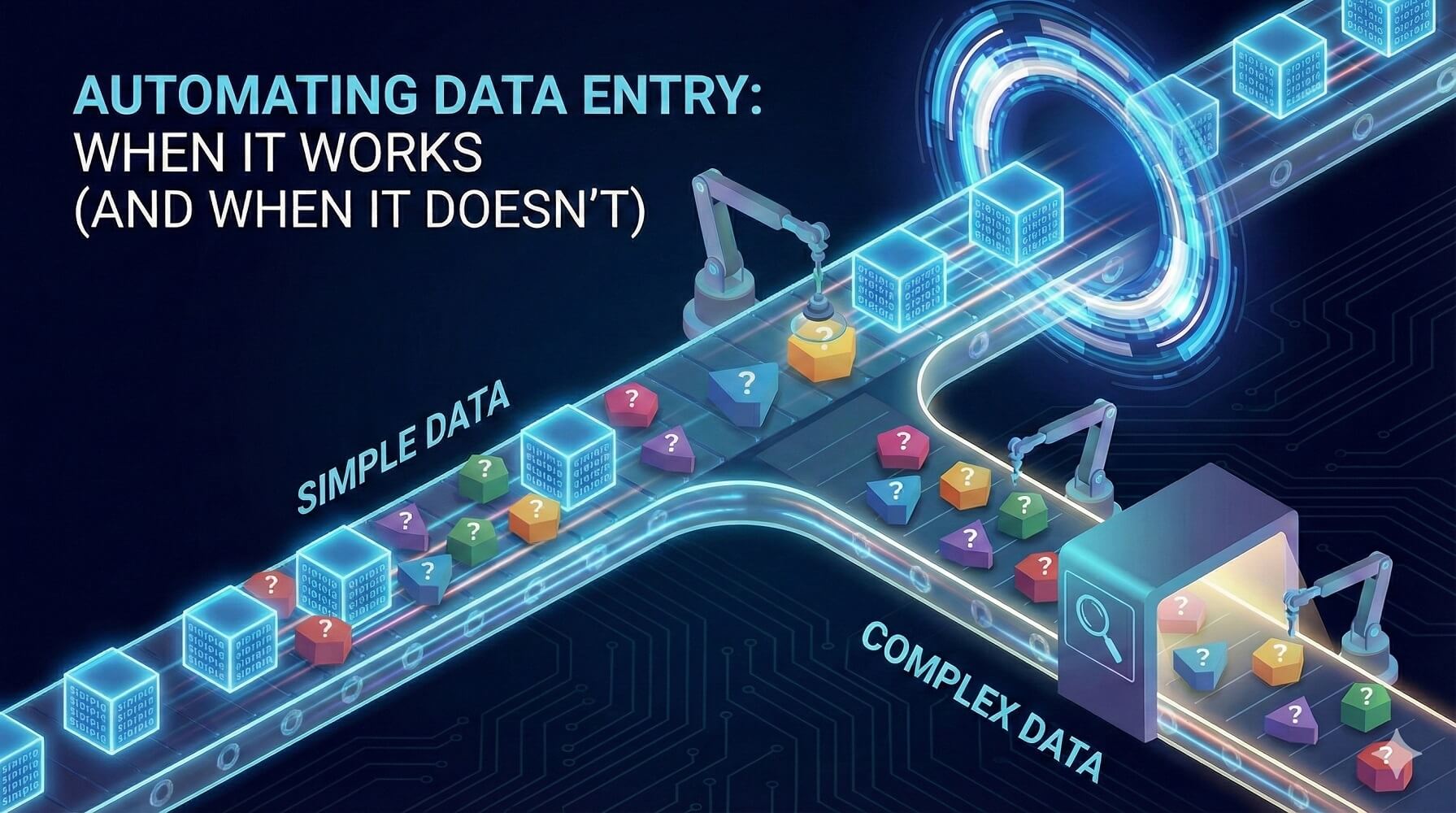

The fix is not to remove humans from the process. The fix is to use AI where AI is strongest: spotting patterns, summarizing inputs, and flagging likely mismatches early.

A better intake process could work like this:

1. AI reviews the written application immediately

As soon as an application is submitted, an AI layer reviews it against the organization’s real operating criteria: business model, product versus service mix, sector fit, traction, team structure, and program suitability.

In our case, the system might have flagged a possible fit issue right away.

2. The applicant receives a clarification request

Instead of going straight to a call, the applicant receives a short follow-up asking for clarification on the specific areas that may not align.

That gives the applicant a chance to add context, and it avoids making the screening call the first moment of real qualification.

3. Internal staff receive a structured summary

At the same time, the internal team gets an AI-prepared brief explaining what the applicant does, where the fit questions are, and what still needs clarification.

That makes the human review faster and more consistent.

4. Humans still make the final call

This part matters. AI should support the process, not replace judgment.

The human still decides whether the company is a fit. AI simply helps surface likely issues earlier, so people spend their time where it matters most.
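To make the four steps above concrete, here is a minimal Python sketch of how such an intake flow could be wired together. Everything in it is an assumption for illustration: the `Application`, `Criteria`, and `ScreeningResult` types, the `prescreen` function, the example criteria, and the applicant "Example Co" are all hypothetical, and none of it describes how MaRS or any other program actually screens. The review step is a plain rule check (`rule_based_flags`) standing in for an AI model, so the example runs without any external service.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Set


@dataclass
class Application:
    company: str
    summary: str
    business_model: str  # e.g. "product", "service", "mixed"
    sector: str


@dataclass
class Criteria:
    # Hypothetical operating criteria; a real program would define its own.
    accepted_business_models: Set[str]
    accepted_sectors: Set[str]


@dataclass
class ScreeningResult:
    flags: List[str]
    clarification_request: Optional[str]
    staff_brief: str
    # Step 4: this code never decides fit; a human does.
    decision: str = "pending human review"


def rule_based_flags(app: Application, criteria: Criteria) -> List[str]:
    """Stand-in for the AI review step: simple rule checks, not a model."""
    flags = []
    if app.business_model not in criteria.accepted_business_models:
        flags.append(f"business model: {app.business_model}")
    if app.sector not in criteria.accepted_sectors:
        flags.append(f"sector: {app.sector}")
    return flags


def prescreen(
    app: Application,
    criteria: Criteria,
    flagger: Callable[[Application, Criteria], List[str]] = rule_based_flags,
) -> ScreeningResult:
    # Step 1: review the written application as soon as it arrives.
    flags = flagger(app, criteria)

    # Step 2: ask the applicant for clarification only where something was flagged.
    clarification = None
    if flags:
        questions = "\n".join(f"- Please tell us more about your {flag}" for flag in flags)
        clarification = (
            "Before we schedule a call, a few areas may not align with the program:\n"
            + questions
        )

    # Step 3: give internal staff a structured summary.
    brief = "\n".join([
        f"Applicant: {app.company}",
        f"What they do: {app.summary}",
        "Fit questions: " + ("; ".join(flags) if flags else "none flagged"),
    ])
    return ScreeningResult(flags, clarification, brief)


# Example run with made-up values.
criteria = Criteria({"product"}, {"software", "health"})
app = Application("Example Co", "Automates back-office workflows.", "service", "software")
result = prescreen(app, criteria)
print(result.clarification_request)
print(result.staff_brief)
```

The `flagger` parameter is the piece you would swap for a real model call. The rest of the flow (the clarification request, the staff brief, and the decision left pending for a human) stays the same either way.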

Why This Matters

This may sound like a small issue, but these small intake inefficiencies compound across applications, lead qualification, vendor review, client onboarding, and support triage.

A few wasted hours here and there become real operating cost.

The opportunity is not just speed. It is earlier clarity, better alignment, and a more respectful process for everyone involved.

Where DigitalStaff Fits In

This is the kind of operational problem we solve at DigitalStaff.

We help organizations reduce manual review work, improve qualification flows, connect their systems, and automate the repetitive steps that slow down growth.

The pattern is simple:

Information comes in. Someone has to interpret it. A decision has to be made. Too much of that process is slower and more manual than it needs to be.

That is where well-designed AI and automation can help.

Final Thought

MaRS had every right to decide we were not the right fit.

I respect that.

But the experience still revealed a useful lesson: if the likely outcome of a review can be inferred from the written application, then the process should be designed to surface that earlier.

That is not just a startup-program issue.

It is a universal operations issue.

And it is exactly the sort of thing AI is good at improving.

Oscar ONeill is the founder of DigitalStaff.ca, an AI and automation company based in London, Ontario. DigitalStaff helps growing organizations reduce manual work, improve workflows, and connect the systems that run their operations.